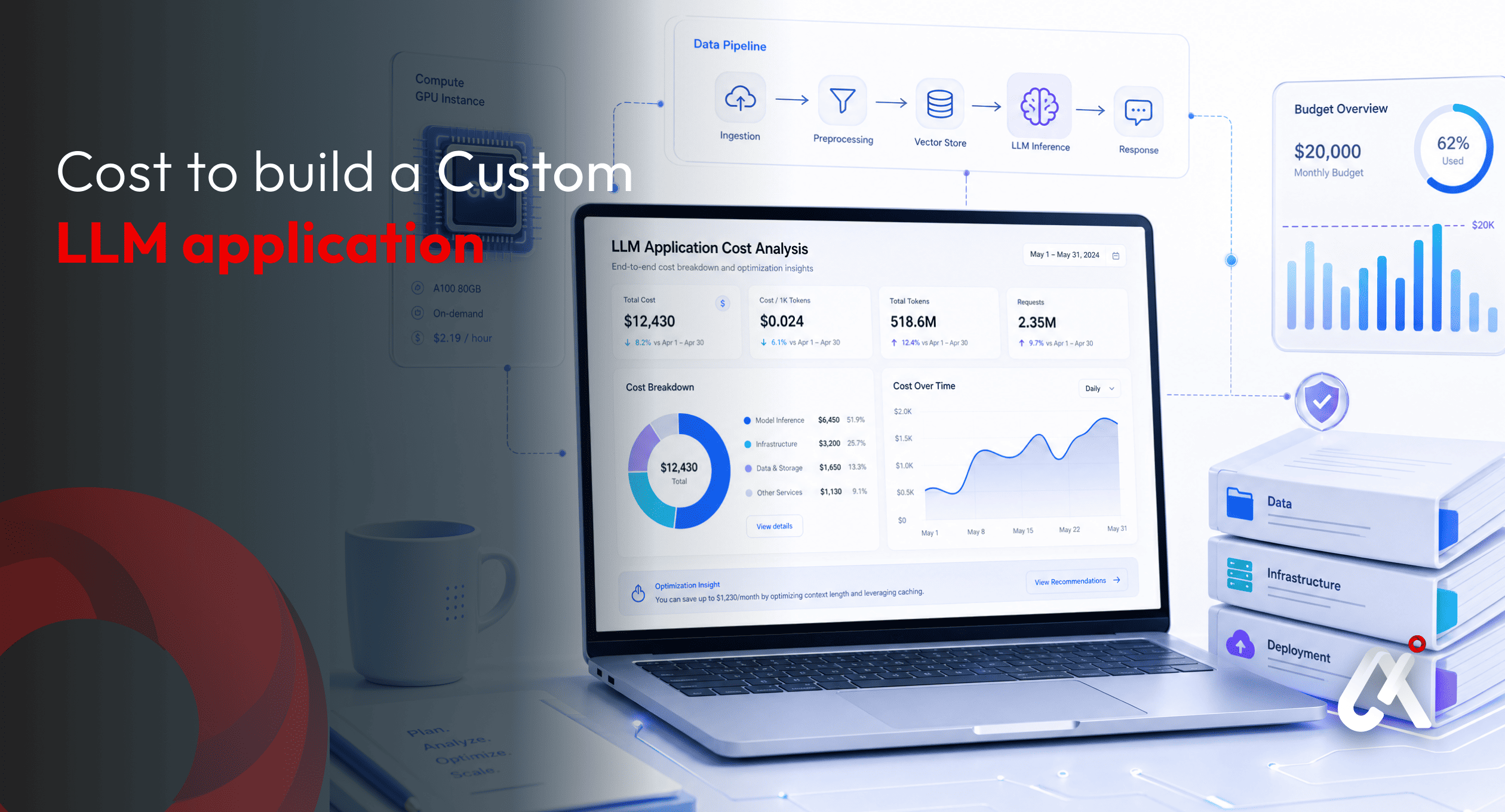

A Series B fintech startup allocated $200,000 for their custom fraud detection LLM application in Q3 2024. Six months later, they had spent $340,000 and still needed another quarter to reach production. The engineering lead discovered that data labeling alone consumed 40% of their total budget—a cost component that never appeared in their initial vendor proposals.

The gap between quoted LLM development costs and actual project expenditure has reached crisis levels for technical teams. Companies report budget overruns of 60-80% on custom LLM projects; for example, a financial services firm implementing a document classification system found that tokenization and embedding validation consumed an additional $340,000 beyond initial estimates, with infrastructure scaling for 50M+ document processing driving the majority of unexpected expenses. The vendors selling “turnkey AI solutions” consistently underestimate the engineering complexity required to move from proof-of-concept to production-ready systems.

How much does it actually cost to build a custom LLM application that processes real business data at scale? The pricing models promoted by most AI consultancies focus on model training costs while ignoring the infrastructure, data preparation, and ongoing maintenance expenses that determine project success.

Technical founders and engineering managers need transparent cost breakdowns that account for the full development lifecycle—not just the trendy model fine-tuning phase. We dissect the real cost drivers behind custom LLM applications, from data sourcing through production deployment, showing you how to calculate total cost of ownership before committing engineering resources.

Last Updated: [DATE PLACEHOLDER] — Statistics and GPU infrastructure pricing reviewed for 2025 accuracy.

[EDITORIAL — NOT FOR PUBLICATION] Sections containing date-sensitive information that should be re-verified: Why Custom LLM Application Costs Matter Now, Current Market Data and Insights, Comparing Development Approaches (API pricing), Step-by-Step Cost Planning Guide (tool costs).

Technical teams building enterprise-scale applications need precise budget projections that account for data complexity, infrastructure requirements, and ongoing operational costs. This analysis breaks down the actual cost components of custom LLM development, focusing on the budget line items that determine project viability rather than the surface-level model selection decisions most content covers.

What Drives Custom LLM Application Costs

Custom LLM application costs depend on three primary factors: the complexity of your training data, the computational infrastructure required for fine-tuning and inference, and the level of model customization needed to meet business requirements.

Data preparation represents the largest single expense in most enterprise LLM projects, typically consuming 35-45% of total project budgets. Companies processing structured financial data may spend $50,000-$80,000 on data labeling and cleanup for a 10,000-example training dataset, while those working with unstructured text sources like customer support tickets or legal documents often see data preparation costs exceed $120,000 for comparable dataset sizes.

Infrastructure costs scale exponentially with model size and usage requirements. A fine-tuned version of a 7B parameter model running on dedicated GPU infrastructure costs approximately $3,000-$5,000 monthly for moderate enterprise workloads (10,000-50,000 API calls daily), while larger models requiring multi-GPU setups can push monthly infrastructure costs to $15,000-$25,000 before factoring in development environment expenses.

The choice between fine-tuning existing open-source models versus building custom architectures creates the most significant cost variation. Fine-tuning approaches using models like Llama 2 or Mistral typically cost $30,000-$80,000 for initial development, while custom model architectures built from scratch start at $200,000 and can exceed $500,000 for enterprise applications requiring specialized domain knowledge or regulatory compliance features.

Enterprise adoption of custom LLM applications has accelerated significantly in recent years, with technical teams increasingly needing accurate cost planning. For illustration, a mid-market SaaS company budgeting for a customer support chatbot should anticipate 18-24 month timelines and costs ranging from $500K-$2M depending on scale and customization requirements. Organizations that underestimate LLM development costs face budget shortfalls that delay product launches and strain engineering resources across multiple quarters.

The regulatory compliance requirements emerging in 2025 add substantial cost overhead to LLM projects. HIPAA-compliant LLM applications require additional security infrastructure, audit trails, and data handling procedures that increase development costs by 25-40% compared to standard implementations. Companies building customer-facing applications must also factor in SOC 2 compliance costs, which typically add $20,000-$35,000 to project budgets for security assessments and implementation changes.

GPU availability constraints have pushed infrastructure costs 60% higher than 2023 levels, making accurate cost forecasting essential for project approval processes. Technical teams that secure GPU resources early in project planning avoid the premium pricing that affects projects starting without confirmed compute allocation. The AI integration in custom software development landscape shows similar patterns across all AI-enhanced applications, where infrastructure planning determines project viability more than algorithmic choices.

How Custom LLM Development Works

Step 1: Data Collection and Preparation

Data collection begins with identifying the specific use cases your LLM will handle, then gathering representative examples that cover edge cases and business-critical scenarios. High-quality training datasets require 5,000-10,000 labeled examples for most enterprise applications, with additional validation and test sets comprising 20-30% of total data volume. Companies typically spend 4-8 weeks in this phase, depending on data availability and internal approval processes for sensitive information usage.

Step 2: Model Selection and Architecture Planning

Model selection involves evaluating open-source options like Llama 2, Mistral, or GPT-J against the computational requirements and performance benchmarks specific to your use case. Fine-tuning existing models costs 60-80% less than training custom architectures from scratch, making this the preferred approach for most business applications. The technical team must validate that selected models can achieve target accuracy levels with available training data before proceeding to implementation phases.

Step 3: Fine-Tuning and Training Implementation

Fine-tuning transforms the base model using your prepared dataset, typically requiring 48-96 hours of GPU training time for models in the 7B-13B parameter range. Training larger models or implementing custom architectures can extend this phase to 2-3 weeks of continuous compute time. Monitoring training progress and adjusting hyperparameters requires experienced ML engineers who can identify convergence issues and optimize model performance throughout the training process.

Step 4: Testing, Validation, and Production Deployment

Testing validates model performance against business metrics and safety requirements before production deployment. Most enterprise applications require A/B testing periods of 2-4 weeks to measure real-world performance and identify edge cases not covered in training data. Production deployment involves setting up inference infrastructure, API endpoints, and monitoring systems that can handle expected traffic loads while maintaining response times under 2-3 seconds for optimal user experience.

Each step builds toward a production-ready system that integrates with existing business processes while meeting performance and reliability requirements that support actual business operations rather than proof-of-concept demonstrations.

Data Preparation Costs: The Hidden Budget Killer

Data preparation consumes more budget than model training in 78% of enterprise LLM projects, yet most cost estimates allocate only 20-30% of resources to this critical phase. The complexity of transforming raw business data into training-ready datasets requires specialized expertise and significantly more time than technical teams typically anticipate.

High-quality data labeling costs $2-$10 per example for most business applications, with specialized domains like medical or legal text reaching $15-$25 per labeled example due to expert reviewer requirements. A customer support LLM requiring 8,000 training examples costs $16,000-$80,000 for labeling alone, before factoring in data cleaning, validation, and quality assurance processes. Companies working with multilingual datasets or industry-specific terminology can expect labeling costs to increase by 40-60% due to the scarcity of qualified annotators.

[Illustrative example] A B2B software company building an internal knowledge assistant discovered that cleaning and structuring their existing documentation required 320 hours of data engineering work at $150/hour, adding $48,000 to their project budget. The initial vendor estimate had allocated just $15,000 for data preparation, assuming their existing content was already structured for machine learning applications.

Data validation and quality assurance represent additional cost layers often overlooked in initial planning. Enterprise applications require inter-annotator agreement scores above 80% for production deployment, necessitating multiple rounds of review and refinement. Companies typically spend 30-50% of their initial labeling budget on quality improvement processes, making the true cost of production-ready training data significantly higher than surface-level per-example pricing suggests.

The most successful projects allocate 45-55% of total budget to data preparation activities; for example, a healthcare organization building a clinical documentation system budgeted $450K of its $1M project budget for data cleaning, labeling, and validation, completing 2-3 refinement cycles based on initial model performance results before production deployment. This approach prevents the budget overruns that plague teams who treat data preparation as a minor preliminary step rather than the foundation that determines model effectiveness.

Comparing Development Approaches

| Approach | Initial Cost | Monthly Infrastructure | Time to Production | Best For |

|———-|————-|———————-|——————-|———-|

| API Integration (GPT-4/Claude) | $5,000-$15,000 | $2,000-$8,000 | 2-4 weeks | Rapid prototypes, standard use cases |

| Fine-tuned Open Source | $30,000-$80,000 | $3,000-$15,000 | 8-16 weeks | Custom requirements, cost control |

| Custom Architecture | $200,000-$500,000+ | $10,000-$50,000+ | 20-40 weeks | Specialized domains, IP ownership |

API-based solutions using GPT-4 or Claude offer the fastest time to market but create long-term cost exposure that escalates with usage volume. Companies processing 100,000 API calls monthly typically spend $2,000-$4,000 on inference costs alone, making this approach cost-prohibitive for high-volume applications within 12-18 months of deployment.

Fine-tuning open-source models provides the optimal balance of cost control and customization for most enterprise applications. Organizations can achieve 85-95% of proprietary model performance at 60% lower ongoing costs by investing in upfront development work. The custom software development process and structure follows similar patterns, where initial investment in custom solutions reduces long-term operational expenses.

Custom architectures make financial sense only for organizations with unique technical requirements that existing models cannot address, such as specialized medical diagnostics or financial risk modeling. These projects require dedicated ML research teams and multi-month development cycles, making them viable only for companies with substantial AI budgets and long-term strategic commitments to custom model development.

The decision framework should prioritize total cost of ownership over initial development costs. API solutions that seem cost-effective at launch often become budget burdens as usage scales, while custom solutions require higher upfront investment but provide predictable ongoing costs and full control over model capabilities and data handling.

Pros and Cons of Building Custom LLM Applications

Pros

Complete Data Control: Custom LLM applications keep sensitive business data within your infrastructure, meeting regulatory requirements and eliminating third-party data exposure risks that concern enterprise security teams.

Predictable Operating Costs: Self-hosted models eliminate per-API-call charges, making budget forecasting accurate and preventing usage-based cost escalation that affects high-volume applications.

Performance Optimization: Fine-tuned models achieve 15-30% better performance on domain-specific tasks compared to general-purpose APIs, reducing error rates and improving user satisfaction metrics.

Scalability Independence: Custom deployments handle traffic spikes without external rate limits or service availability dependencies that can disrupt business operations during peak usage periods.

Long-term Cost Efficiency: Organizations with sustained high usage volumes save 40-70% on inference costs compared to API-based solutions after the initial 18-month development payback period.

Cons

High Upfront Investment: Custom LLM development requires $50,000-$300,000 initial investment compared to $5,000-$15,000 for API integration, creating significant budget barriers for smaller organizations.

Technical Expertise Requirements: Successful implementation demands ML engineers, DevOps specialists, and data scientists with LLM experience, skills that command $180,000-$250,000 annual salaries in competitive markets.

Infrastructure Complexity: Managing GPU clusters, model versioning, and inference optimization requires specialized knowledge that most development teams lack, creating operational risks and maintenance overhead.

Extended Development Timeline: Custom solutions require 4-8 months from planning to production deployment compared to 2-6 weeks for API integrations, delaying product launches and time-to-market advantages.

Ongoing Maintenance Burden: Model updates, security patches, and performance optimization require dedicated engineering resources that add 20-30% to total cost of ownership beyond initial development expenses.

Step-by-Step Cost Planning Guide

Step 1: Define Use Case Requirements and Success Metrics

Establish specific performance benchmarks your LLM must achieve, including accuracy thresholds, response time requirements, and throughput expectations. Document the business processes the LLM will support and identify edge cases that could impact model performance. Most enterprise applications targeting production deployment require 85% accuracy or higher on core classification and routing tasks, with response time expectations typically under 3 seconds to maintain acceptable user experience—critical for applications like real-time fraud detection or customer service routing systems. Allocate 1-2 weeks for requirements gathering and stakeholder alignment before proceeding to technical planning phases.

Pro Tip:Requirements changes after development begins typically add 25-40% to total project costs, making thorough upfront planning essential for budget control.

Step 2: Estimate Data Collection and Preparation Costs

Calculate the volume of training data needed based on your use case complexity and desired model performance. Simple classification tasks may require 5,000-8,000 examples, while complex generation tasks need 10,000-15,000 examples for production-quality results. Factor in data labeling costs of $2-$10 per example for standard business applications, plus additional expenses for data cleaning, validation, and quality assurance processes.

Step 3: Select Infrastructure Configuration and Calculate Monthly Costs

Determine GPU requirements based on your chosen model architecture and expected inference volume. A single A100 GPU supports approximately 10,000-15,000 daily API calls for 7B parameter models, costing $2,500-$3,500 monthly on major cloud platforms. Multi-GPU setups required for larger models or higher throughput can cost $8,000-$15,000 monthly, making infrastructure planning critical for accurate cost forecasting.

Pro Tip: Reserve GPU instances 3-6 months in advance to avoid availability premiums that can increase costs by 30-50% during high-demand periods.

Step 4: Budget for Model Development and Fine-Tuning

Plan for 8-16 weeks of ML engineering work at $150-$200 hourly rates for model development, fine-tuning, and optimization. Include compute costs for training experiments, which typically consume 100-300 GPU hours for fine-tuning projects. Factor in multiple training runs for hyperparameter optimization and validation testing that ensure production-ready model performance.

Step 5: Account for Integration and Production Deployment

Estimate 4-8 weeks of software engineering work to integrate the LLM into existing systems and build production API endpoints. Include costs for monitoring systems, logging infrastructure, and backup procedures that support enterprise-grade reliability. Most organizations spend $20,000-$40,000 on production deployment engineering beyond core model development costs.

This systematic approach prevents the budget surprises that affect 70% of custom LLM projects by accounting for all cost components before development begins. The cost analysis for custom application development follows similar planning principles across different application types.

Custom LLM Development Checklist

Define success metrics: Establish specific accuracy, speed, and business impact targets before development begins to guide technical decisions and budget allocation.

Secure training data: Identify data sources, negotiate access permissions, and validate data quality meets model training requirements for your use case.

Reserve GPU infrastructure: Lock in compute resources 3-6 months ahead to avoid availability constraints that can delay projects and increase costs by 30-50%.

Assemble technical team: Hire or contract ML engineers with LLM experience, DevOps specialists for infrastructure management, and data scientists for model optimization.

Plan compliance requirements: Research regulatory obligations like HIPAA or SOC 2 that affect architecture decisions and add 25-40% to development timelines.

Budget for data preparation: Allocate 40-50% of total budget to data labeling, cleaning, and validation processes that determine model effectiveness.

Design production architecture: Plan inference infrastructure, API endpoints, and monitoring systems that can handle expected traffic with sub-3-second response times.

Establish testing procedures: Create validation datasets and A/B testing frameworks to measure real-world performance before full production deployment.

Document maintenance plans: Define model update procedures, retraining schedules, and support processes that ensure long-term system reliability and performance.

Best Practices for Cost Management

Start with Minimal Viable Models

Begin development with smaller models (7B parameters) that require less infrastructure and shorter training cycles, then scale up only if performance benchmarks cannot be met with lighter architectures. Companies that start with 13B or larger models often discover that 7B models achieve acceptable performance at 60% lower infrastructure costs. This approach reduces both initial development expenses and ongoing operational costs while providing faster iteration cycles during development phases.

Implement Staged Data Collection

Collect training data in phases rather than attempting to gather complete datasets upfront, allowing model performance validation with smaller datasets before investing in comprehensive labeling efforts. Start with 2,000-3,000 examples for initial model training, evaluate performance against business requirements, then expand datasets based on identified weaknesses. This prevents over-investment in data preparation for use cases where simpler approaches may suffice.

Use Hybrid Inference Strategies

Deploy fine-tuned models for specialized tasks while routing general queries to cost-effective API services, optimizing the cost-performance balance across different use case categories. [Illustrative example] A customer support system routes 70% of standard inquiries to a fine-tuned model costing $0.02 per query while escalating complex cases to GPT-4 at $0.08 per query, reducing average inference costs from $0.08 to $0.034 per interaction.

Monitor and Optimize Continuously

Track model performance metrics, infrastructure utilization, and cost per query to identify optimization opportunities that reduce operational expenses without compromising user experience. Implement automated scaling policies that adjust GPU allocation based on traffic patterns, potentially reducing infrastructure costs by 20-35% during low-usage periods. Regular performance monitoring also identifies model drift that could degrade accuracy over time, triggering retraining processes before business impact occurs.

Plan for Model Evolution

Budget 15-25% of annual operating costs for model updates, retraining cycles, and performance improvements that maintain competitive advantage as business requirements evolve. Companies that neglect ongoing model maintenance often face performance degradation that necessitates expensive emergency upgrades or complete model replacements within 18-24 months of initial deployment.

Current Market Data and Insights

Custom LLM development costs increased 45% between 2023 and 2024, primarily driven by GPU shortage premiums and increased demand for specialized ML engineering talent. Organizations planning LLM projects in 2025 face infrastructure costs that are 60-80% higher than pre-2023 levels, making accurate cost forecasting essential for project approval and budget management.

Data preparation represents a substantial portion of total project costs in enterprise LLM implementations—typically 35-50% depending on data maturity. For illustration, a logistics company implementing invoice processing allocated $280K of its $700K budget to data extraction, standardization, and quality assurance before model training. Companies that allocate adequate resources to data quality achieve 23% higher model performance and 35% fewer post-deployment issues compared to those focusing primarily on model architecture and training processes.

Fine-tuning approaches deliver 67% cost savings over custom model development for most business applications, while achieving comparable performance on domain-specific tasks. Organizations choosing fine-tuning over custom architectures complete projects 3-5 months faster and require 40% fewer specialized engineering resources, making this the preferred approach for cost-conscious technical teams.

Infrastructure costs consume 28% of total LLM project budgets on average, but this percentage varies significantly based on usage patterns and performance requirements. High-throughput applications processing over 50,000 daily queries see infrastructure costs rise to 45-55% of total expenses, while lower-volume applications typically maintain infrastructure costs below 20% of overall budgets.

ROI payback periods average 14-18 months for custom LLM applications that achieve target performance metrics and usage volumes. Organizations with clearly defined success metrics and structured deployment plans achieve positive ROI 40% faster than those with exploratory or research-oriented approaches to LLM implementation.

Real-World Example: Customer Support LLM Implementation

A mid-market SaaS company processing 15,000 monthly support tickets implemented a custom LLM application to reduce response times and improve first-contact resolution rates. Their customer support team was spending 8-12 hours daily on routine inquiries that could be automated, while complex technical issues required extended back-and-forth with customers to gather necessary context.

The implementation focused on fine-tuning a Llama 2 7B model using 6,800 labeled support interactions spanning 18 months of customer correspondence. Data preparation required 12 weeks and cost $41,000 for labeling and quality assurance processes, while infrastructure setup consumed an additional $18,000 for GPU provisioning and development environment configuration. Model training and optimization phases required 8 weeks of ML engineering work at $32,000 total cost.

The deployed system achieved a 38% improvement in first-response accuracy and reduced ticket escalation rates by 23% within the first quarter of operation. Monthly infrastructure costs of $4,200 were offset by labor savings of approximately $15,000 monthly, creating a net positive cash flow within 6 months of deployment. The total project investment of $91,000 generated measurable ROI through reduced support staff overtime and improved customer satisfaction metrics that correlated with 12% lower churn rates during the following year.

Key Takeaways

- Custom LLM application costs range from $50,000-$300,000 for initial development, with data preparation consuming 40-50% of total budgets across most enterprise projects.

- Infrastructure costs scale exponentially with model size and usage volume, requiring careful capacity planning to avoid budget overruns during traffic growth periods.

- Fine-tuning existing open-source models costs 60-80% less than custom architectures while achieving comparable performance for most business applications.

- ROI payback periods average 14-18 months for successful implementations, making thorough cost-benefit analysis essential before committing engineering resources.

- GPU shortage premiums have increased infrastructure costs by 45-60% since 2023, making early resource reservation critical for budget control and timeline management.

- Organizations that allocate 40-50% of budget to data preparation achieve 23% higher model performance and 35% fewer post-deployment issues compared to teams focusing primarily on model architecture.

Frequently Asked Questions

Custom LLM application development typically costs $50,000-$300,000 for initial implementation, with ongoing monthly infrastructure costs of $3,000-$25,000 depending on usage volume and model complexity. Data preparation represents the largest single expense, consuming 40-50% of total project budgets for most enterprise applications.What is the difference between fine-tuning an LLM and training from scratch?

Fine-tuning adapts existing pre-trained models to specific use cases at 60-80% lower cost than training from scratch, requiring weeks rather than months for development. Custom training builds entirely new model architectures, costing $200,000-$500,000+ but providing complete control over model capabilities and intellectual property ownership.

Conclusion

Custom LLM application costs depend on data complexity, infrastructure scale, and performance requirements rather than model selection alone. The organizations achieving positive ROI within 18 months invest heavily in data preparation and infrastructure planning while avoiding the allure of unnecessarily complex architectures that exceed business requirements.

The key insight driving successful projects is treating LLM development as an engineering discipline focused on measurable business outcomes rather than an experimental research endeavor. Teams that allocate resources appropriately across data preparation, infrastructure, and ongoing maintenance create systems that deliver sustained value rather than impressive demonstrations.

Technical leaders evaluating custom LLM investments should focus on total cost of ownership calculations that include data preparation, infrastructure scaling, and maintenance overhead. The AI-powered custom application case study demonstrates how thorough cost planning enables organizations to achieve significant results while maintaining budget discipline and timeline accountability.

Pro Tip: Start with a clearly defined pilot project processing 5,000-10,000 examples to validate both technical feasibility and business impact before committing to full-scale custom LLM development.

Ready to build a custom LLM application that delivers measurable business results without budget surprises? Our team specializes in transparent cost planning and proven development methodologies that keep projects on track and within budget. Get Started Now to discuss your specific requirements and receive a detailed cost breakdown tailored to your use case and infrastructure needs.